Codetown

Codetown ::: a software developer's community

What's the difference between grid computing and cloud computing?

I don't clearly grasp the difference between these two concepts. Someone told me that the essential difference is that cloud computing gives you a large amount of storage space, while the grid offers more than storage: it also lets you draw on a lot of computing power.

Does anyone understand these two concepts more clearly and can explain them to us?

Replies to This Discussion

-

Permalink Reply by Thomas Michaud on October 26, 2011 at 3:38pm

-

I don't claim to be an expert, but the difference is (I think) in how they are used.

Grid represents a scalable framework. You write your algorithm and your code and use as much computing power as your wallet can afford. (This is useful because some work is highly parallelizable.)
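To make "highly parallelizable" concrete, here is a minimal sketch of work that splits into independent chunks. It is only an illustration, not how any particular grid product works: the prime-counting task and the function names are made up for the example, and a local thread pool stands in for the grid nodes. On a real grid, each chunk would be shipped to a different machine.

```python
from concurrent.futures import ThreadPoolExecutor


def is_prime(n: int) -> bool:
    """Trial-division primality check; deliberately simple."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def count_primes(start: int, stop: int) -> int:
    """Count primes in [start, stop). Each call is independent of the others."""
    return sum(1 for n in range(start, stop) if is_prime(n))


def split_range(limit: int, parts: int) -> list[tuple[int, int]]:
    """Cut [0, limit) into at most `parts` contiguous, non-overlapping chunks."""
    if limit <= 0:
        return []
    step = -(-limit // parts)  # ceiling division
    return [(lo, min(lo + step, limit)) for lo in range(0, limit, step)]


def parallel_count_primes(limit: int, workers: int) -> int:
    """Hand one chunk to each worker, then add up the partial counts."""
    chunks = split_range(limit, workers)
    # The thread pool is a stand-in (assumption) for a pool of grid nodes.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = pool.map(lambda chunk: count_primes(*chunk), chunks)
        return sum(partials)
```

Because no chunk needs the result of another, adding workers only changes how the range is cut up, not the answer. That independence is what lets this kind of job scale with the amount of hardware you pay for.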

Cloud computing offers storage (true), but it also covers the applications themselves. Ideally, with cloud computing, you don't need to have certain applications on your desktop: as long as you can reach the cloud, you can get, update, and use your data.

-

-

Permalink Reply by Hervé-greg MOKWABO on October 26, 2011 at 3:49pm

-

Thanks, Thomas.

What I got:

Grid - a lot of computing power, suited to work that can be highly parallelized

Cloud - storage, and you don't need to have certain applications on your desktop (so that's just like a server application?)

Can someone tell us more?

-

-

Permalink Reply by Bradlee Sargent on October 27, 2011 at 10:58pm

-

I think if you look at the history, you will understand some of the differences.

In my own experience, the grid began with Oracle using it as a kind of metadatabase that pointed to multiple databases residing on different but uniform hardware systems. So if a company had multiple Unix boxes and needed to grow its database, instead of purchasing additional hardware it could implement the grid database and combine its Unix servers into one database resource.
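The "metadatabase" idea can be sketched roughly as follows. This is a toy illustration under stated assumptions, not Oracle's design: the `MetaDatabase` class, its key-value interface, and the hash-based routing are all hypothetical, and in-memory SQLite connections stand in for the databases on separate servers.

```python
import sqlite3
import zlib


class MetaDatabase:
    """Hypothetical facade that presents several databases as one key-value store."""

    def __init__(self, node_count: int) -> None:
        # Each in-memory SQLite connection stands in for a database on its own server.
        self.nodes = [sqlite3.connect(":memory:") for _ in range(node_count)]
        for node in self.nodes:
            node.execute("CREATE TABLE kv (key TEXT PRIMARY KEY, value TEXT)")

    def _node_for(self, key: str) -> sqlite3.Connection:
        # A stable hash, so the same key is always routed to the same node.
        return self.nodes[zlib.crc32(key.encode("utf-8")) % len(self.nodes)]

    def put(self, key: str, value: str) -> None:
        self._node_for(key).execute(
            "INSERT OR REPLACE INTO kv (key, value) VALUES (?, ?)", (key, value)
        )

    def get(self, key: str):
        row = self._node_for(key).execute(
            "SELECT value FROM kv WHERE key = ?", (key,)
        ).fetchone()
        return row[0] if row else None

    def total_rows(self) -> int:
        # The caller sees one logical database; the rows are spread over all nodes.
        return sum(
            node.execute("SELECT COUNT(*) FROM kv").fetchone()[0]
            for node in self.nodes
        )
```

The caller only talks to `put` and `get` and never needs to know which underlying database holds a given row, which is the "one database resource" effect described above.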

The cloud goes further: it offers not only a database but an entire server, including the operating system.

The cloud exposes an operating system, whereas a grid exposes a database.

But I am no buzzword expert, so I might be wrong.

-

-

Permalink Reply by Jackie Gleason on October 28, 2011 at 10:17am

-

I just talked to a buddy about this. Essentially, the Oracle Grid product is different because it runs the DB in memory, so access times are a lot quicker. I don't think it is really a matter of "vs." so much as grid computing being a way to handle DB transactions faster.

He said their grid servers had something like 72 GB of RAM. Freaking crazy.

-

-

Permalink Reply by Hervé-greg MOKWABO on November 29, 2011 at 10:33am

-

Bradlee, what do you think of Jackie's reply?

-

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

InfoQ Reading List

Presentation: Confidently Automating Changes Across a Diverse Fleet

Netflix engineer Casey Bleifer shares how to achieve rapid, automated code changes across a massive, diverse software fleet. She discusses building an event-driven orchestration platform using composable, Lego-like steps, and explains how Netflix utilizes automated canary validation, compliance checks, and a custom "confidence metric" to eliminate the long tail of manual engineering migrations.

By Casey BleiferIBM Vault Enterprise 2.0 Brings Automated LDAP Secrets Management to Enterprise Identity Security

IBM and HashiCorp have announced new LDAP secrets management capabilities in IBM Vault Enterprise 2.0, introducing a redesigned architecture to manage LDAP credentials, support password rotation, and automate the identity lifecycle.

By Craig RisiMicrosoft Foundry Adds Runtime, Tooling, and Governance for Production Agents

Microsoft used their Build 2026 event to announce new functionality for Microsoft Foundry. Citing Foundry as "the place where AI agents move from experiments to production systems," in a blog post, Nick Brady writes that the release brings “runtime, tools, memory, grounding, models, observability, and governance” that developers need for production agents, rather than just new model endpoints.

By Matt SaundersAWS Releases Next Generation of Amazon OpenSearch Serverless

Amazon Web Services has recently announced the general availability of the next generation of Amazon OpenSearch Serverless, with a redesigned architecture that enables 20 times faster resource provisioning than the previous serverless architecture, true scale-to-zero capability, and up to 60% lower cost than a provisioned cluster for peak loads.

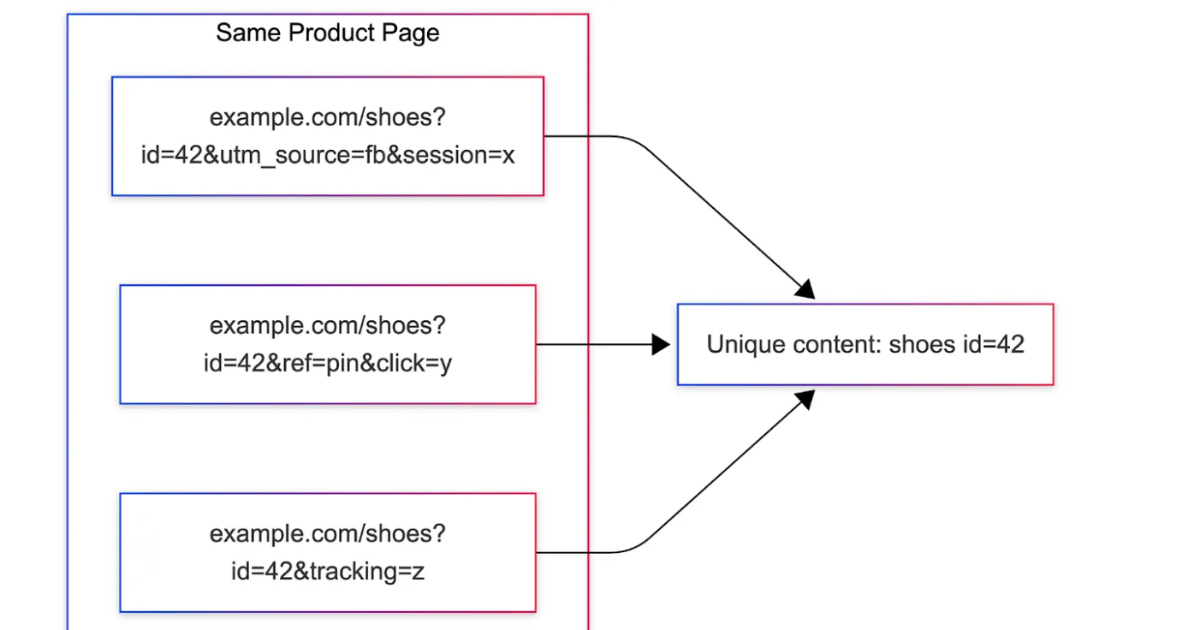

By Gianmarco NalinPinterest Uses Content Fingerprints for URL Deduplication across Millions of Domains

Pinterest introduced MIQPS, a URL normalization system that identifies which query parameters affect page identity using rendered content fingerprints. It reduces duplicate processing across millions of domains by replacing rule-based approaches with offline analysis, anomaly detection, and runtime parameter maps, improving ingestion efficiency and scalability in large-scale content pipelines.

By Leela Kumili

© 2026 Created by Michael Levin.
