Codetown

Codetown ::: a software developer's community

What's the difference between Grid Computing and Cloud Computing?

I don't clearly understand the difference between these two concepts. Someone told me that the essential difference is that cloud computing gives you a large amount of storage space, while the grid offers more than storage: with the grid we can also take advantage of a lot of computing power.

Can anyone who understands these two concepts more clearly explain them to us?

Replies to This Discussion

Reply by Thomas Michaud on October 26, 2011 at 3:38pm

I don't claim to be the expert, but the difference is (I think) in use.

Grid represents a scalable framework. You write your algorithm and your code and use as much computing power as your wallet can afford. (This is useful because some work is highly parallelizable.)

Cloud computing offers storage (true), but it also covers the applications. Ideally, with cloud computing you don't need to have certain applications on your desktop: as long as you can reach the cloud, you can get, update, and use your data.
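To make the "highly parallelizable" point concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the function names (`count_primes`, `split_range`, `parallel_count_primes`) are made up for this example, and a local thread pool stands in for grid nodes. A real grid would hand the chunks to a scheduler running on many machines, and Python threads will not actually speed up CPU-bound work like this, so the structure is the point rather than the timing.

```python
from concurrent.futures import ThreadPoolExecutor


def count_primes(bounds):
    """Count primes in the half-open range [lo, hi)."""
    lo, hi = bounds
    count = 0
    for n in range(max(lo, 2), hi):
        # n is prime if no d in [2, sqrt(n)] divides it.
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def split_range(lo, hi, parts):
    """Split [lo, hi) into `parts` contiguous chunks of near-equal size."""
    step, extra = divmod(hi - lo, parts)
    chunks, start = [], lo
    for i in range(parts):
        end = start + step + (1 if i < extra else 0)
        chunks.append((start, end))
        start = end
    return chunks


def parallel_count_primes(lo, hi, workers):
    """Count primes in [lo, hi) by giving each worker its own chunk."""
    # The chunks share no state, so the worker count can grow
    # (hypothetically, more grid nodes) without changing the algorithm.
    chunks = split_range(lo, hi, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_primes, chunks))


if __name__ == "__main__":
    print(parallel_count_primes(0, 10_000, workers=4))
```

The only thing that changes when more computing power is available is the `workers` argument, which is roughly what "as much computing power as your wallet can afford" means in practice.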

Reply by Hervé-greg MOKWABO on October 26, 2011 at 3:49pm

Thanks, Thomas.

What I got:

Grid - a lot of computing power, and the work can be highly parallelized

Cloud - storage, and you don't need to have certain applications on your desktop (is that just like a server application?)

Can someone tell us more?

Reply by Bradlee Sargent on October 27, 2011 at 10:58pm

I think if you look at the history, you will understand some of the difference.

In my own experience, the grid began with Oracle using it as a type of metadatabase, which would point to multiple databases residing on different but uniform hardware systems. So if a company had multiple Unix boxes and needed to increase the size of its database, instead of purchasing additional hardware it could implement the grid database and combine its multiple Unix servers into one database resource.

The cloud goes further: it offers not only a database but an entire server, including the operating system.

The cloud exposes an operating system, whereas a grid exposes a database.

But I am no buzzword expert, so I might be wrong.

Reply by Jackie Gleason on October 28, 2011 at 10:17am

I just talked to a buddy about this. Essentially, the Oracle Grid product is different because it runs the DB in memory, so access times are a lot quicker. I don't think it is really a matter of grid vs. cloud so much as grid computing being a way to handle DB transactions faster.

He said their grid servers had something like 72 GB of RAM. Freaking crazy.

Reply by Hervé-greg MOKWABO on November 29, 2011 at 10:33am

Bradlee, what do you think about Jackie's reply?

-

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

Looking for Jobs or Staff?

Check out the Codetown Jobs group.

InfoQ Reading List

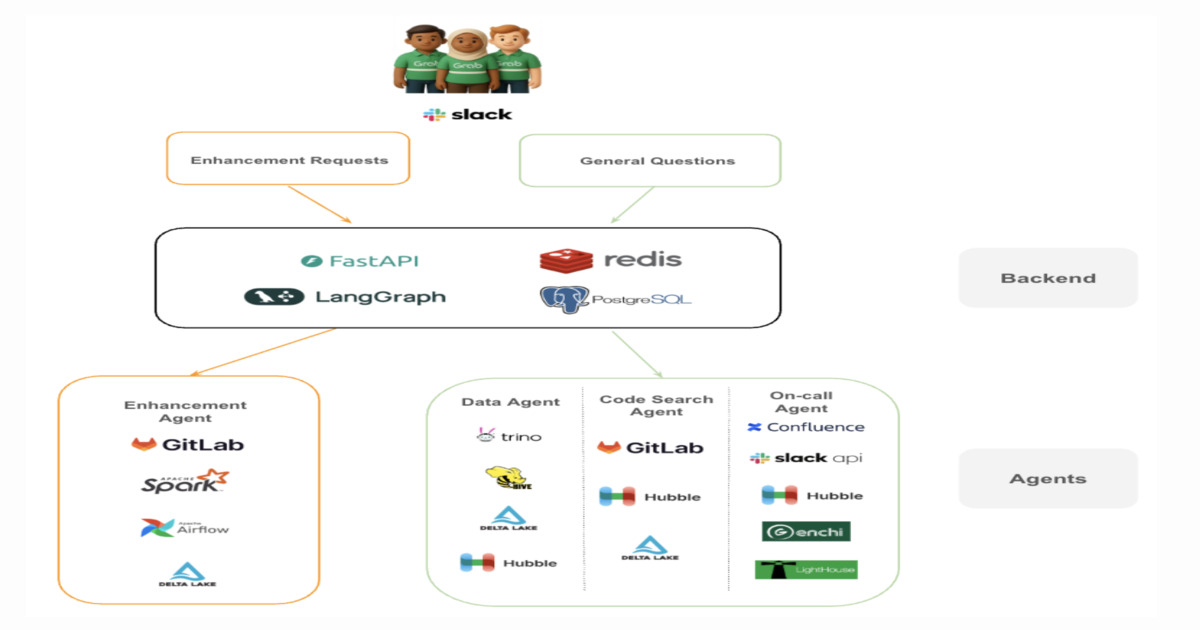

Designing a Multi-Agent System for Engineering Support at Scale: A Case Study From Grab

Grab’s Central Data Team built a multi-agent AI system to automate repetitive engineering support tasks across its data warehouse platform. The system separates investigation and enhancement workflows using specialized agents coordinated via an orchestration layer. It reduces operational load, improves resolution speed, and shifts engineering effort from firefighting to platform engineering work.

By Leela KumiliPresentation: The AI Gateway: Scaling Centralized Inference Across Decentralized Teams

Meryem Arik discusses why modern engineering teams face "inference chaos" and how AI model gateways provide a critical control layer. She explains the balance between empowering decentralized teams to choose the best models and maintaining centralized oversight for security, RBAC, and cost control. Explore open-source solutions like LiteLLM and Doubleword to streamline your AI infra.

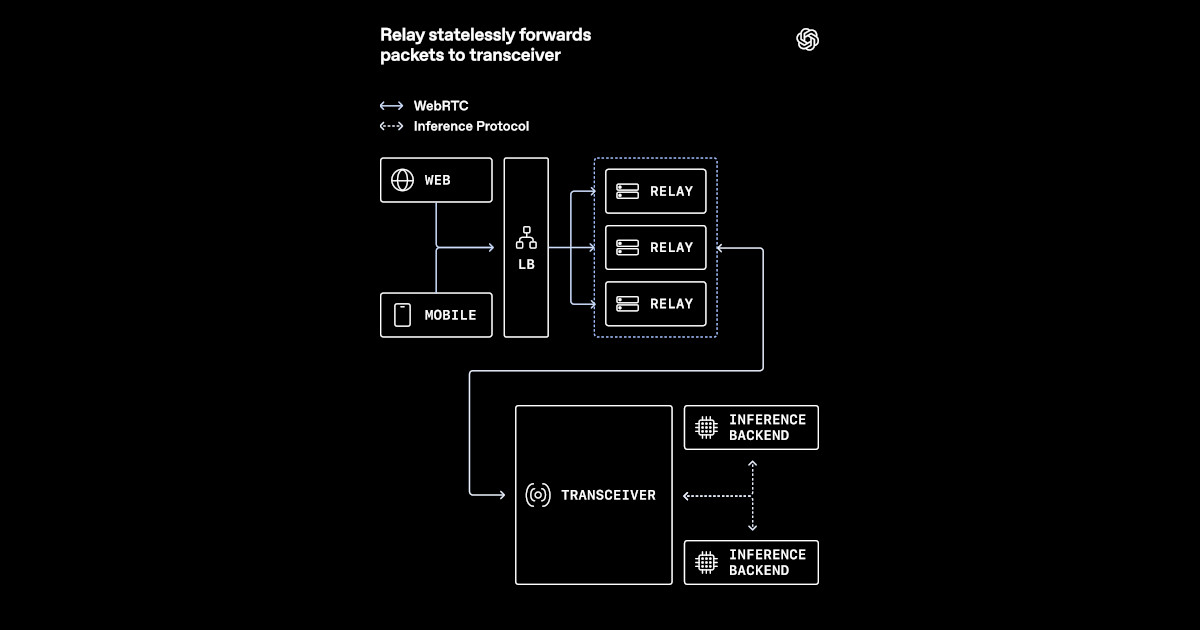

By Meryem ArikOpenAI Outlines WebRTC Architecture for Low-Latency Voice AI at Scale

OpenAI recently outlined how it adapted WebRTC for low-latency voice AI at global scale. The new architecture replaced a conventional media termination model with a relay-transceiver design better suited to Kubernetes and cloud load balancers. It keeps WebRTC session state in a dedicated transceiver layer while using relays to reduce public UDP exposure and keep media routing close to users.

By Eran StillerPip 26.1 Ships Dependency Cooldowns and Experimental Lockfile Support to Combat Supply Chain Attacks

Pip 26.1 ships dependency cooldowns that enforce a waiting period before newly published packages can be installed, and experimental pylock.toml lockfile support from PEP 751. Research shows a 7-day cooldown would have prevented 8 out of 10 analyzed supply chain attacks from reaching end users.

By Steef-Jan WiggersAnthropic Introduces MCP Tunnels for Private Agent Access to Internal Systems

Anthropic has expanded its Claude Managed Agents platform with two enterprise-focused capabilities: self-hosted sandboxes and MCP tunnels. The release aims to address a recurring challenge in enterprise AI deployments, where organizations want to use autonomous agents but cannot allow execution environments or internal systems to leave their security perimeter.

By Robert Krzaczyński

© 2026 Created by Michael Levin.