Codetown

Codetown ::: a software developer's community

The Future of GWT Report

The report "The Future of GWT" should interest developers, architects, and managers alike. It covers GWT's usability, its competitors, and respondents' opinions on how it will stand up against Dart.

Over 1,300 respondents provided data. Overall, developers regard GWT highly, mainly because it targets multiple browsers at once and reduces hand-coding of JavaScript. Slow compile times were a major complaint. These findings will be obvious to anyone familiar with GWT, but they are useful to newcomers. The report digs much deeper, though, so experienced developers can also learn from what a sizable sample of survey respondents thinks.

Here's a preview of what you'll see in the report:

You'll have to provide your name and email address to get a copy, but I think that's fair, since the folks at Vaadin and the other big contributors worked hard to produce it. Thanks to Dave Booth for bringing this info to Codetown. If you have questions, Dave is your direct link to the group that put the report together. Check it out here.

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

InfoQ Reading List

Arm Open-Sources Metis, an AI Security Framework Outperforming Traditional SAST Tools

Arm has open-sourced Metis, an agentic AI security framework designed to autonomously uncover complex software vulnerabilities. Unlike traditional pattern-based tools, Metis applies semantic reasoning to analyze cross-component dependencies and provides clear, natural language explanations for its findings.

By Sergio De SimoneGoogle Cloud Suspends Railway's Production Account, Causing Eight-Hour Platform-Wide Outage

Google Cloud's automated systems suspended Railway's production account without notice, triggering an eight-hour platform-wide outage affecting 3 million users. The cascade took down workloads across all providers including AWS and bare metal because Railway's control plane was hosted on GCP. Railway is demoting GCP to backup-only status.

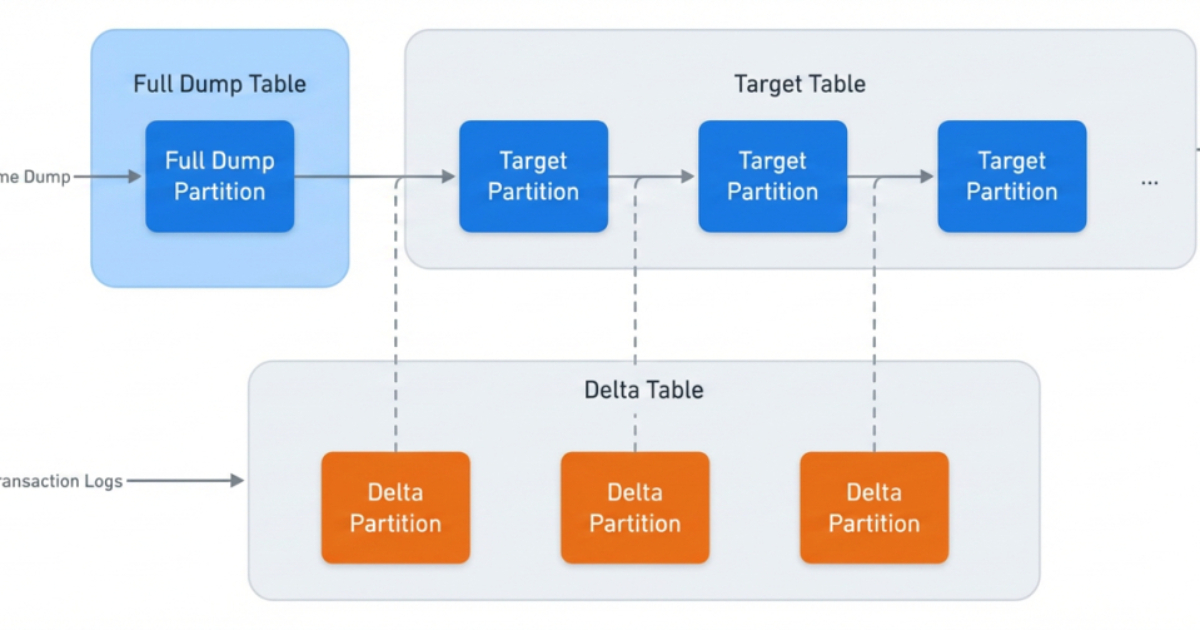

By Steef-Jan WiggersHow Meta Rebuilt Data Ingestion for Petabyte-Scale Reliability

The engineering team at Meta recently outlined how the company migrated a data ingestion platform that transfers several petabytes of MySQL social graph data daily to improve reliability and operational efficiency. The team used techniques like reverse shadowing and continuous checksum monitoring to ensure zero downtime during the transition.

By Renato LosioPresentation: Building Evals for AI Adoption: From Principles to Practice

Mallika Rao discusses the hidden risk of evaluation debt in production AI systems, drawing on her experience at Twitter, Walmart, and Netflix. She explains why traditional metrics fail modern architectures, breaks down a five-layer evaluation stack spanning infrastructure and UX, and shares a diagnostic maturity model to help engineering leaders eliminate silent semantic failures.

By Mallika RaoAI-Assisted Migration Tool Helps Teams Move from ingress-nginx to Higress in Minutes

The Cloud Native Computing Foundation has highlighted a new AI-assisted migration approach that enabled engineers to migrate 60 ingress-nginx resources to Higress in roughly 30 minutes, demonstrating how artificial intelligence is increasingly being applied to modernize Kubernetes networking and gateway infrastructure.

By Craig Risi

© 2026 Created by Michael Levin.
