Codetown ::: a software developer's community

Small Businesses Can Meet the Challenges of Data Conversion

Implementing a new data system can be a difficult process. A new system can bring real benefits, including improved processes and easier access to data, but the existing data must first be converted into a format the new system can use. This is a challenge that small businesses must face. This post looks at five challenges of data conversion so that you are prepared to conquer them!

Scope of Data

The first step is to define the scope of the data to determine how much of it needs to be converted. You’ll likely find that some of it is obsolete or no longer useful, so there is no need to convert it. Make a list and double-check it. How much data is being converted? How much of this data must be converted manually? Determining the scope of the conversion is a critical step because it gives you the basis for a plan of action.
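If your inventory lives in a spreadsheet or a script, the scope questions above reduce to a few sums. The Python sketch below is a hypothetical example rather than a prescribed tool: the `DataSet` fields, the data set names, and the record counts are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DataSet:
    name: str
    records: int
    keep: bool    # False if the data is obsolete and will not be converted
    manual: bool  # True if it must be re-keyed or cleaned up by hand


def summarize_scope(datasets: List[DataSet]) -> Dict[str, int]:
    """Answer the scope questions: how much data exists, how much is converted, how much by hand."""
    in_scope = [d for d in datasets if d.keep]
    return {
        "total_records": sum(d.records for d in datasets),
        "to_convert": sum(d.records for d in in_scope),
        "manual": sum(d.records for d in in_scope if d.manual),
        "skipped": sum(d.records for d in datasets if not d.keep),
    }


# Hypothetical inventory; replace with your own data sets and counts.
INVENTORY = [
    DataSet("customers", 4200, keep=True, manual=False),
    DataSet("open_invoices", 350, keep=True, manual=False),
    DataSet("paper_contracts", 120, keep=True, manual=True),
    DataSet("old_promo_mailing_list", 9000, keep=False, manual=False),
]

if __name__ == "__main__":
    for key, value in summarize_scope(INVENTORY).items():
        print(f"{key}: {value}")
```

With these sample figures, 4,670 of 13,670 records are in scope and 120 of those need manual work.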

Data Sources and Destinations Need to Be Defined

Now you will have to determine exactly where the data is coming from. Are you pulling it from several different databases, or have you already consolidated everything into a single database? You must clearly define each source.

Once you know the source, identify the destination for the data. Together, the source and destination determine what type of conversion is necessary. In some cases there might be more than one destination, so you’ll need to identify which data goes to each destination. Write all of this down.
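Writing the sources and destinations down as data makes it easy to see what each destination receives and to spot where two sources feed the same destination, which usually means records will have to be merged and de-duplicated. This is a minimal Python sketch; the system and table names in `ROUTES` are hypothetical placeholders.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical routing list: (source system, table, destination system).
ROUTES: List[Tuple[str, str, str]] = [
    ("legacy_accounting_db", "invoices", "new_erp"),
    ("legacy_accounting_db", "customers", "new_crm"),
    ("shop_spreadsheet", "customers", "new_crm"),
    ("shop_spreadsheet", "products", "new_erp"),
]


def group_by_destination(routes: List[Tuple[str, str, str]]) -> Dict[str, List[Tuple[str, str]]]:
    """Return {destination: [(source, table), ...]} so each destination's inputs are listed together."""
    grouped: Dict[str, List[Tuple[str, str]]] = defaultdict(list)
    for source, table, destination in routes:
        grouped[destination].append((source, table))
    return dict(grouped)


def find_merges(routes: List[Tuple[str, str, str]]) -> List[Tuple[str, str]]:
    """Return (destination, table) pairs fed by more than one source; these need de-duplication rules."""
    feeders: Dict[Tuple[str, str], set] = defaultdict(set)
    for source, table, destination in routes:
        feeders[(destination, table)].add(source)
    return sorted(key for key, sources in feeders.items() if len(sources) > 1)


if __name__ == "__main__":
    for destination, inputs in sorted(group_by_destination(ROUTES).items()):
        print(destination)
        for source, table in inputs:
            print(f"  <- {source}.{table}")
    print("needs merging:", find_merges(ROUTES))
```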

It’s Easy to Get Lost in the Complexity

A business accumulates so much data that it’s easy to get lost in a sea of raw records. The sheer volume of it intimidates many entrepreneurs into putting off updates to their systems. They simply don’t want to deal with the data conversion.

However, there is a way to face this challenge: data mapping. Many experts see this as an essential step in a successful data conversion. Detail the requirements for each data element within the conversion; the list you made while defining the scope will make this easier. Answer all of the following questions:

- Which business processes will be affected by the change?

- What will the overall transformation look like?

- What new data inputs can you incorporate to meet the needs of the new system?

Every element of the conversion must be documented and mapped out in detail. This includes the estimated time to implement each change.
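A data map can be as simple as one row per field: where the value comes from, where it goes, how it is transformed, and how long the change is expected to take. The Python sketch below assumes a hypothetical legacy export with `CustName`, `OrderDt`, and `Total` columns; the field names, formats, and hour estimates are illustrative only.

```python
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal
from typing import Callable, Dict, List


@dataclass(frozen=True)
class FieldMapping:
    source_field: str
    target_field: str
    transform: Callable[[str], object]
    est_hours: float  # estimated time to implement and test this mapping


def parse_us_date(value: str) -> str:
    """Convert a MM/DD/YYYY date to ISO 8601 (YYYY-MM-DD)."""
    return datetime.strptime(value.strip(), "%m/%d/%Y").date().isoformat()


def to_cents(value: str) -> int:
    """Convert a currency string such as '$1,234.50' to integer cents, using Decimal to avoid float error."""
    cleaned = value.replace("$", "").replace(",", "").strip()
    return int((Decimal(cleaned) * 100).to_integral_value())


# Hypothetical map from the legacy export to the new system's fields.
MAPPINGS: List[FieldMapping] = [
    FieldMapping("CustName", "customer_name", str.strip, 0.5),
    FieldMapping("OrderDt", "order_date", parse_us_date, 2.0),
    FieldMapping("Total", "total_cents", to_cents, 1.5),
]


def convert_record(record: Dict[str, str], mappings: List[FieldMapping]) -> Dict[str, object]:
    """Apply every mapping to one source record; fail loudly if a mapped field is missing."""
    missing = [m.source_field for m in mappings if m.source_field not in record]
    if missing:
        raise KeyError(f"missing source fields: {missing}")
    return {m.target_field: m.transform(record[m.source_field]) for m in mappings}


def total_estimate(mappings: List[FieldMapping]) -> float:
    """Sum the per-field time estimates for planning."""
    return sum(m.est_hours for m in mappings)


if __name__ == "__main__":
    row = {"CustName": "  Acme Ltd ", "OrderDt": "03/07/2024", "Total": "$1,234.50"}
    print(convert_record(row, MAPPINGS))
    print("estimated hours:", total_estimate(MAPPINGS))
```

Keeping each transform as a small, named function means it can be checked against sample records before the full conversion runs.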

Determining Everyone’s Roles

This is another challenge that can become a major bottleneck in the overall conversion. I’ve seen companies forget to define roles for the conversion, so everyone did their own thing, resulting in an even bigger mess. It’s essential that you define every team member’s role before you begin the data conversion process.

- Who will be validating the new data?

- Who will input data into the new system in order to keep the business running?

- Who needs to be locked out of the system until the conversion is finished?

What Resources Are Required?

Finally, you’ll have to list every resource required throughout the data conversion process. Develop a full plan of action from beginning to end, including development, testing, and validation of the new data. Then review this plan in detail with everyone involved.

Keeping your systems up to date is important because the business world keeps changing quickly. If you prepare for each of the challenges in this post, you will find that data conversion is not quite as intimidating as you believed.

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

Looking for Jobs or Staff?

Check out the Codetown Jobs group.

InfoQ Reading List

ExtendDB: Open Source Amazon DynamoDB Compatible Adapter with Pluggable Storage Backends

AWS recently announced ExtendDB, a DynamoDB-compatible adapter that lets developers use the DynamoDB API with different storage backends, starting with PostgreSQL. The project supports existing SDKs and tools without modification, giving teams greater flexibility to run DynamoDB-style workloads outside of native DynamoDB while maintaining compatibility with current applications and workflows.

By Renato LosioCloudflare Identifies Query Planning Bottleneck in ClickHouse

Cloudflare recently described how a slowdown in its billing pipeline was traced to contention inside the query planning stage of ClickHouse. The team profiled the bottleneck and patched ClickHouse to replace an exclusive lock with a shared lock, drop the per-query copy of the parts list, and improve part filtering.

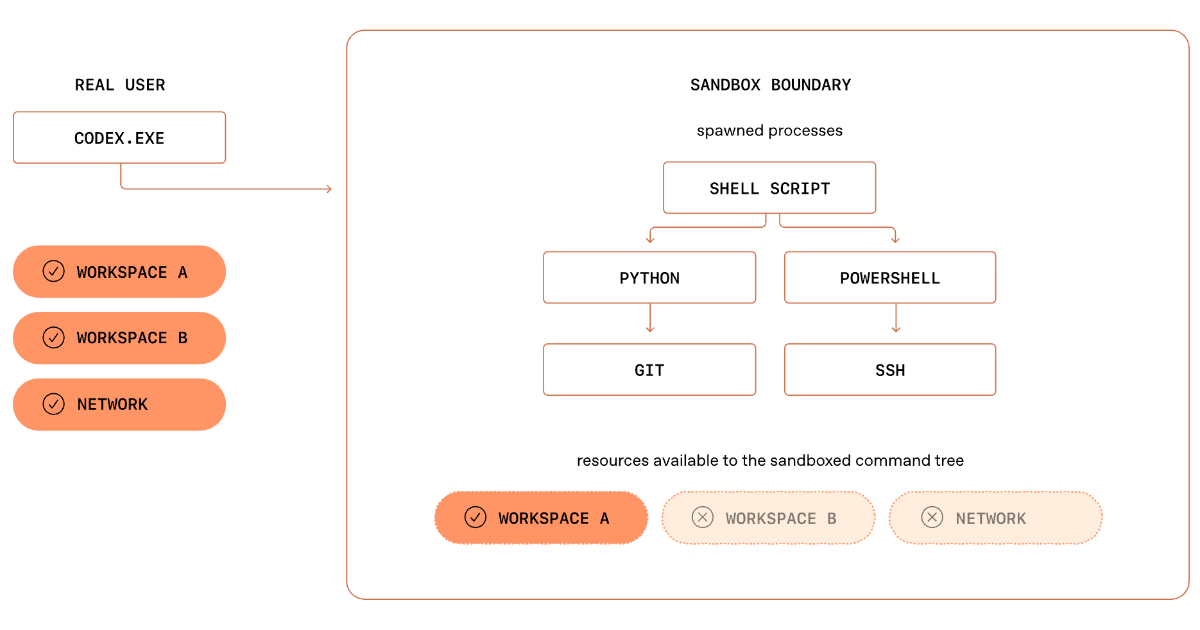

By Renato LosioHow OpenAI Built a Secure Windows Sandbox for Codex Agents

OpenAI details Codex Windows sandbox architecture, showing how SIDs, ACLs, restricted tokens, and dedicated sandbox accounts enable safe execution of autonomous coding tasks. The design balances isolation with real developer workflows and shows how OS security primitives must be composed for AI agents on local development environments.

By Leela KumiliPresentation: Platform Teams Enabling AI - MCP/Multi-Agentic Tools across Linkedin

LinkedIn’s Karthik Ramgopal and Prince Valluri discuss leveraging AI as a new execution model for large-scale engineering. They explain how to move beyond fragmented implementations by building platform abstractions for orchestration, structured context, and safe tooling like MCP. They share architectural insights from real-world coding, observation, and UI testing agents built at LinkedIn.

By Karthik Ramgopal, Prince ValluriHow Netflix Maps Thousands of Microservices in Real-Time

Netflix has shared details about Service Topology. This internal system creates and updates a live dependency graph for thousands of microservices. It helps engineers see how services connect and resolve issues more quickly. The system merges three separate data sources into a single, queryable graph. It updates almost in real-time as traffic patterns shift.

By Claudio Masolo

© 2026 Created by Michael Levin.