Codetown

Codetown ::: a software developer's community

On Objective-C

Is Objective-C a step backwards in terms of memory management? Why does Apple use it despite advances in the language realm? I heard the other day that Java would give people some sort of unfair abilities in the App Store. Not sure. But people I know (Kevin Neelands, for one - check out his best-selling Periodic Table of the Elements - free!) tell me Objective-C is agony in terms of pointer arithmetic. Thoughts?

I recently downloaded Scan, a QR-code decoder, and Square, a credit card processing app, from the App Store. These apps are the best of the 21st century, IMHO. What are yours? Scan lets you scan a QR code (above) and takes you directly to the embedded URL (or whatever: phone number, text, etc.). Square revolutionizes cellphone use for payment processing at less cost than PayPal. GasBuddy is a map-based mashup. You'll see variations on these ideas in many apps to come.

I've used an Android phone and didn't like the GUI. Maps are clunky, and maps are my favorite iPhone app. Many people tell me that, because I organize JUGs, I should be on an Android phone. I like the best available, so I go with iPhone, even though I pay nearly $150/mo for my contract (includes one traditional phone at 800 min/mo ea).

Comment

-

Comment by Behr@ng on July 12, 2011 at 7:12am

-

I don't think Apple is intentionally using a complex language to filter out multi-platform programmers. In fact, they are working hard on MacRuby to let Ruby programmers write native OS X apps. They used to have Cocoa bindings for Java as well, but those never became very popular, so Apple stopped maintaining them.

Right now, IMHO, all popular OO compiled languages are somewhat complex. And I think apart from C++, Objective-C is the only remaining popular compiled language. And IMO Objective-C is easier to learn and more flexible compared to C++.

-

Comment by RBD on July 11, 2011 at 1:51pm

-

When it comes to programming languages, 'better' is a relative term. I think Java and Objective-C are best used for specific purposes.

Objective-C is older, that is true; however, it offers dynamic programming (categories, dynamic typing, forwarding) along with message passing, as well as low-level C pointers, in a compiled environment. I would say Objective-C definitely offers more 'power to the programmer' at the language level, at the cost that the programmer has to be more diligent with regard to memory management.

Java is more modern and removes most of the pitfalls of memory management from the programmer. Java empowers the programmer via the Java API, which is quite powerful, instead of at the language level.

Someone who has just recently graduated from college could easily start coding in Java, since the language-level constructs are simple and easy to understand and its API is very powerful.

However, that same person would have a difficult time with Objective-C, since using it effectively requires vastly more low-level knowledge of the language constructs. Add to the learning curve the need to learn the vast Cocoa API (Core Data, Foundation, Core Graphics, UIKit, etc.).

From an engineering point of view, I think Objective-C provides more language-level control and capabilities with a steep learning curve that is not forgiving to multi-platform programmers.

I would guess that Apple will continue to use Objective-C since Apple can use its steep learning curve to filter out multi-platform programmers from the Apple ecosystem.

-

Comment by Behr@ng on July 11, 2011 at 4:25am

-

@Kevin: I think Jobs was referring to Java on the desktop and IMO he is right. How many popular desktop apps are written/being written in Java? Apart from IDEs and developer tools, there are very few popular desktop apps being written in Java.

And I don't know about that particular app of yours, but NetBeans and JDeveloper's UIs are very ugly on OS X. IDEA looks good but does not look like a native app at all and ditto for Eclipse.

-

Comment by Walt Sellers on July 11, 2011 at 3:13am

-

I'm not sure of the history, but a retain count model is at least a step up from C's malloc/free, allowing memory allocation to be controlled by the object.

Garbage collection basically frees the programmer from worrying about memory, at the cost of increased work for the execution environment. This has been an easy trade-off in the days of fast CPUs and larger sets of RAM on desktops.
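To make the retain count idea concrete, here is a minimal sketch in plain C. It is only an illustration of the model, not how Cocoa implements it: the `Object` struct, the `object_create`, `object_retain` and `object_release` functions, and the `live_objects` counter are all hypothetical names made up for this example.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical object carrying its own retain count (not Cocoa's real layout). */
typedef struct {
    int retain_count;
    int payload;
} Object;

/* Illustration only: number of objects created and not yet freed. */
static int live_objects = 0;

/* The creator starts out as the single owner, so the count begins at 1. */
Object *object_create(int payload) {
    Object *obj = malloc(sizeof *obj);
    if (obj == NULL) {
        return NULL;
    }
    obj->retain_count = 1;
    obj->payload = payload;
    live_objects++;
    return obj;
}

/* Each additional owner bumps the count. */
Object *object_retain(Object *obj) {
    assert(obj != NULL);
    obj->retain_count++;
    return obj;
}

/* Each owner gives up its claim; the last release frees the memory. */
void object_release(Object *obj) {
    assert(obj != NULL && obj->retain_count > 0);
    if (--obj->retain_count == 0) {
        live_objects--;
        free(obj);
    }
}

int live_object_count(void) {
    return live_objects;
}
```

With plain malloc/free, some single piece of code has to know when the last user is done. Here every owner just pairs its own retain with a release, and the object frees itself when the count reaches zero.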

The iPhone, however, is a fairly weak device in comparison to any desktop of the last 5 years. The RAM is minuscule and the CPU power low. It is easy to overwhelm the device's resources.

Sure, the memory management may seem like a step back, but it's partly because the hardware resources are also a step back... from a desktop.

As for pointer arithmetic, it is still a C-based language underneath. So it is no harder than any other C-based language.
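For anyone who hasn't done pointer arithmetic in a while, here is a small sketch in plain C, which should compile unchanged in an Objective-C source file since Objective-C is a superset of C. The function names `sum_ints` and `index_of` are made up for this example.

```c
#include <assert.h>
#include <stddef.h>

/* Sum an int array by walking a pointer from the first element
 * to one past the last element. */
int sum_ints(const int *values, size_t count) {
    assert(values != NULL || count == 0);
    const int *end = values + count; /* moves count elements, not count bytes */
    int total = 0;
    for (const int *p = values; p != end; ++p) {
        total += *p;
    }
    return total;
}

/* Subtracting two pointers into the same array gives a distance
 * measured in elements. */
ptrdiff_t index_of(const int *base, const int *element) {
    return element - base;
}
```

Nothing here is specific to Objective-C. The agony usually comes from mixing this kind of raw pointer code with objects, not from the arithmetic itself.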

Even for someone who was programming on a Mac OS desktop, there is enough missing in iOS to make work difficult at times.

As for language gripes in general, there are plenty of times when I wonder why I am still editing plain text files to create software? The coding environment has come along some over the years with syntax coloring and code-folding, but it still seems clunky.

-

Comment by Kevin Neelands on July 10, 2011 at 12:26pm

-

Mike has already stated my view on Objective-C - it may have been state of the art 20 years ago, but I find it horribly dated and difficult to use now. At work I am working on two versions of the same program - an Objective-C version for the iPad and a Java version for laptops. I cannot see any difference in performance; to my eyes the Java version using Swing is better looking than the Objective-C version using Apple's GUI widgets, and I estimate the Java development time to be 1/3 the time spent on the Objective-C version.

To add to the frustrations, the Java bytecode was developed with RISC architectures like the ARM in mind, and my understanding is that the ARM microprocessor now has a Jazelle mode, which means approximately 70% of the Java bytecodes have a one-to-one correspondence with the ARM opcodes, so the JVM does very little interpreting.

The Android development environment, using a Java variant, looks quite appealing and I'm trying to get more familiar with it. The main difference is that layout managers are largely replaced with an XML-based layout system. The other difference is that they are careful to explain their Dalvik (?) system is not a JVM per se - I gather it is more a very simple-minded system to split the few Java opcodes that don't go directly to the ARM into macros of 3-4 opcodes. (I'm speculating a bit.)

When I do a web search on the question, a quote by Steve Jobs always comes up in which he publicly stated that no one used Java anymore because it had become too heavy and cumbersome. I think he got C++ and Java confused, and my understanding is that Mr. Jobs's one fatal flaw is his refusal to admit mistakes that he made.

Bottom line: if Apple would adopt a Java-based development platform like Android has, in addition to their Objective-C Xcode IDE, it would greatly reduce the man-hours put into iPad apps.

-

Comment by Behr@ng on July 10, 2011 at 9:06am

-

Edit: Since Objective-C 2.0!

-

Comment by Behr@ng on July 10, 2011 at 9:05am

-

I know just a little bit about Objective-C and Cocoa. Earlier versions of Cocoa didn't have GC. However, since Objective-C it's possible to turn on GC if one wants to. But as Kevin has said, it is not as powerful as Java's GC.

However, in GC-less mode, Cocoa objects have a couple of methods named retain and release that make memory management more straightforward.

The problem with Java is that it's not a good platform for developing desktop apps, while it probably is the most powerful server-side platform. Desktop Java apps are memory hungry, don't look as beautiful as native apps, and are slow. They don't sell well.

My favorite iOS apps: Things, Kindle, iBooks, Twitterrific, River of News, Evernote, Evernote Peek, Instapaper, Dropbox, and 1Password! :D

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

Looking for Jobs or Staff?

Check out the Codetown Jobs group.

InfoQ Reading List

Presentation: Confidently Automating Changes Across a Diverse Fleet

Netflix engineer Casey Bleifer shares how to achieve rapid, automated code changes across a massive, diverse software fleet. She discusses building an event-driven orchestration platform using composable, Lego-like steps, and explains how Netflix utilizes automated canary validation, compliance checks, and a custom "confidence metric" to eliminate the long tail of manual engineering migrations.

By Casey BleiferIBM Vault Enterprise 2.0 Brings Automated LDAP Secrets Management to Enterprise Identity Security

IBM and HashiCorp have announced new LDAP secrets management capabilities in IBM Vault Enterprise 2.0, introducing a redesigned architecture to manage LDAP credentials, support password rotation, and automate the identity lifecycle.

By Craig RisiMicrosoft Foundry Adds Runtime, Tooling, and Governance for Production Agents

Microsoft used their Build 2026 event to announce new functionality for Microsoft Foundry. Citing Foundry as "the place where AI agents move from experiments to production systems," in a blog post, Nick Brady writes that the release brings “runtime, tools, memory, grounding, models, observability, and governance” that developers need for production agents, rather than just new model endpoints.

By Matt SaundersAWS Releases Next Generation of Amazon OpenSearch Serverless

Amazon Web Services has recently announced the general availability of the next generation of Amazon OpenSearch Serverless, with a redesigned architecture that enables 20 times faster resource provisioning than the previous serverless architecture, true scale-to-zero capability, and up to 60% lower cost than a provisioned cluster for peak loads.

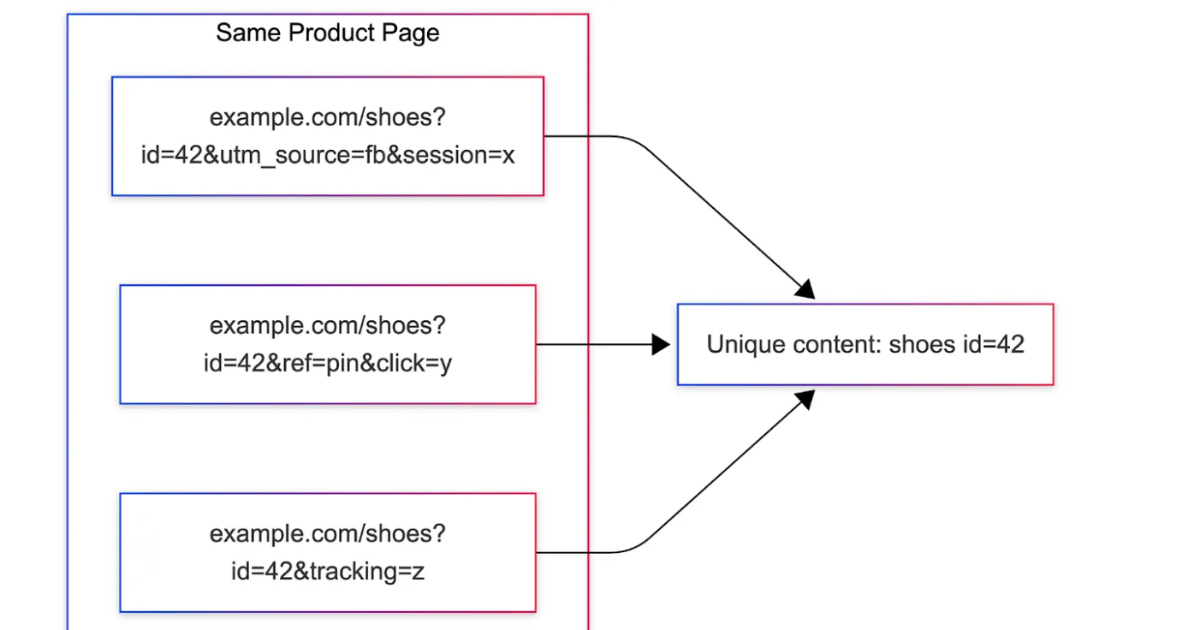

By Gianmarco NalinPinterest Uses Content Fingerprints for URL Deduplication Across Millions of Domains

Pinterest introduced MIQPS, a URL normalization system that identifies which query parameters affect page identity using rendered content fingerprints. It reduces duplicate processing across millions of domains by replacing rule-based approaches with offline analysis, anomaly detection, and runtime parameter maps, improving ingestion efficiency and scalability in large-scale content pipelines.

By Leela Kumili

© 2026 Created by Michael Levin.