Codetown

Codetown ::: a software developer's community

Meeting Mycroft: An Open AI Platform You Can Order Around By Voice

Mycroft developer Ryan Sipes, speaking from the show floor of this year's OSCON in Austin, Texas (see our video interview), says that what started as a weekend project, using voice input and some light AI to locate misplaced tools in a makerspace, morphed into a much more ambitious and successfully crowd-funded project, hosted at the Lawrence Center for Entrepreneurship in Lawrence, Kansas. The turning point came when he and his fellow developers realized that speech recognition, and the interfaces for exploiting it, were far more rudimentary than they had initially assumed.

How ambitious? Mycroft bills itself as "an open hardware artificial intelligence platform"; the goal is to allow you to "interact with everything in your house, and interact with all your data, through your voice." That's a familiar aim of late, but mostly from a shortlist of the biggest names in technology. Apple's Siri is exclusive to (and helps sell) Apple hardware; Google's voice interface likewise sells Android phones and tablets, and helps round out Google's apps-and-interfaces-for-everything approach. Amazon and Microsoft have poured resources into voice recognition systems, too -- Amazon's Echo, running the company's Alexa voice service, is probably the most direct parallel to the Mycroft system that was on display at OSCON, in that it provides a dedicated box loaded with mics and a speaker system for 2-way voice interaction.

The Mycroft system, though, is based on two of the best-known names in open hardware, Raspberry Pi and Arduino, and it's meant to be open and stay open; all of its software is released under the GPL v3. The initial hardware for Mycroft includes RCA ports, an Ethernet jack, four USB ports, HDMI, and dozens of addressable LEDs that form Mycroft's "face." That HDMI output might not be immediately useful, but Sipes points out that the hardware is powerful enough to play Netflix films or run multimedia software like Kodi, and to control them by voice. Unusually for a consumer device, even one aimed at hardware hackers, Mycroft also includes an accessible ribbon-cable port for users who'd like to hook up a camera or some other peripheral. Two other "ports" (of a sort) might appeal to that kind of user, too: if you pop out the plugs emblazoned with the OSI Open Hardware logo, two holes on each side of Mycroft's case make it easy to attach to a robot body or other mount.

The open-source difference in Mycroft isn't just in the hacker-friendly hardware. The real star of the show is the software, and that's proudly open as well; despite the hardware on offer, "We're a software company," says Sipes. The Python-based project draws on, and creates, open-source back-end tools, but it isn't tied to any particular back end for interpreting or acting on the voice input it receives. The team has open-sourced several tools so far: the Adapt intent parser, the text-to-speech engine Mimic (based on a fork of CMU's Flite), and the open speech-to-text engine OpenSTT.

The commercial projects named above (Siri et al.) may offer varying degrees of privacy or extensibility, but ultimately they all come from "large companies that work really hard to mine your data" and to keep each user in a silo, says Sipes. By contrast, "We're like Switzerland." With Mycroft, the speech recognition and speech synthesis tools are swappable, and an active developer community is adding new voice-activated capabilities ("skills") to the system.

And if you can program Python, your idea could be next.
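To make the idea of an intent parser and pluggable "skills" a little more concrete, here is a minimal, hypothetical Python sketch. It is not Mycroft's skill API and not the Adapt library: the `Skill`, `IntentRouter`, and `handle_audio` names, the keyword-matching rule, and the canned responses are all illustrative assumptions. It routes an utterance to the skill whose required keywords all appear (preferring the most specific match) and treats speech-to-text as a swappable function.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Skill:
    """Hypothetical voice skill: keywords that must all be spoken, plus a handler."""
    name: str
    keywords: set[str]
    handler: Callable[[str], str]


@dataclass
class IntentRouter:
    """Toy keyword-based intent matcher (illustrative only; not the Adapt API)."""
    skills: list[Skill] = field(default_factory=list)

    def register(self, skill: Skill) -> None:
        self.skills.append(skill)

    def route(self, utterance: str) -> Optional[str]:
        # Normalize the utterance into a set of lowercase words, dropping punctuation
        words = set(re.findall(r"[a-z']+", utterance.lower()))
        # A skill is a candidate only if every one of its keywords was spoken
        candidates = [s for s in self.skills if s.keywords <= words]
        if not candidates:
            return None
        # Prefer the most specific skill (the one with the most keywords)
        best = max(candidates, key=lambda s: len(s.keywords))
        return best.handler(utterance)


def handle_audio(audio: bytes,
                 transcribe: Callable[[bytes], str],
                 router: IntentRouter) -> Optional[str]:
    """Any speech-to-text backend with this signature can be swapped in."""
    return router.route(transcribe(audio))


if __name__ == "__main__":
    router = IntentRouter()
    # Hypothetical skill echoing Mycroft's origin: finding tools in a makerspace
    router.register(Skill("find_hammer", {"where", "hammer"},
                          lambda u: "The hammer is on shelf B."))
    router.register(Skill("weather", {"weather"}, lambda u: "It's sunny."))

    # A stand-in "speech-to-text engine" that ignores the audio and returns fixed text
    fake_stt = lambda audio: "Where is the hammer?"
    print(handle_audio(b"\x00\x01", fake_stt, router))  # The hammer is on shelf B.
```

Because `handle_audio` accepts any function from audio bytes to text, swapping speech-to-text engines in this sketch just means passing a different `transcribe` callable; the router and the skills stay untouched.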

Notes

Welcome to Codetown!

Codetown is a social network. It's got blogs, forums, groups, personal pages and more! You might think of Codetown as a funky camper van with lots of compartments for your stuff and a great multimedia system, too! Best of all, Codetown has room for all of your friends.

Created by Michael Levin Dec 18, 2008 at 6:56pm. Last updated by Michael Levin May 4, 2018.

Looking for Jobs or Staff?

Check out the Codetown Jobs group.

InfoQ Reading List

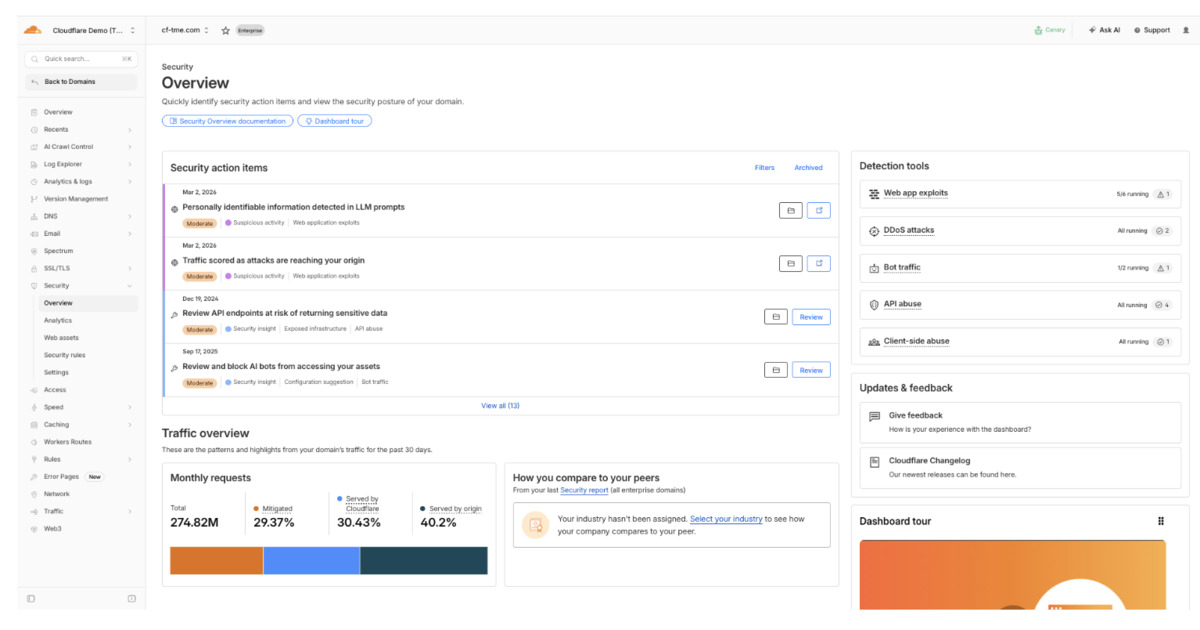

Cloudflare Processes 10M+ Daily Insights with New Security Overview Dashboard

Cloudflare has launched a Security Overview dashboard that consolidates security signals into prioritized action items. It surfaces millions of daily insights, helping teams identify and remediate critical risks faster. Built on distributed checkers and real-time event processing, it integrates analytics workflows to reduce investigation overhead and improve response efficiency.

By Leela KumiliPresentation: The Human Scalability Problem: Why Your Teams Don’t Scale Like Your Code

Charlotte de Jong Schouwenburg discusses the "human bottlenecks" of hyper-growth. While systems scale, human cooperation often breaks down due to communication overload and lost context. She shares proven tools for behavioral scalability - including communication architecture and "engineering trust" - to help leaders maintain high-performing, autonomous teams without sacrificing speed or culture.

By Charlotte de Jong SchouwenburgArticle: From Batch to Micro-Batch Streaming: Lessons Learned the Hard Way in a Delta Index Pipeline

This article describes how a production delta-index pipeline migrated from scheduled batch to micro-batch Spark Structured Streaming. It covers why record-level streaming was rejected, how partition-based watermarks replaced fragile S3 completion markers, overlap-window correctness, and restart-as-design strategies for better predictability in object-store–based ingestion systems.

By Parveen SainiPodcast: Roq: Leveraging Quarkus to Build Static Sites at the Speed of Go

Andy Damevin, a developer who worked on Quarkus for almost a decade, talks about Roq. A project that started as an experiment to try to see if it’s possible to build a static web site generator on top of quarkus. He touches on the rationale for choosing Java and Quarkus, how to migrate to Roq, and the platform's future.

By Andy DamevinDoorDash Used Copilot to Convert Its XCTest-Based iOS Test Suite to Swift Testing

Using Copilot along with strong reliability safeguards, DoorDash migrated their iOS XCTest-based test suite to Swift Testing, thus modernizing a large test suite quickly, safely, and with measurable performance gains, says DoorDash engineer Matheus Gois.

By Sergio De Simone

© 2026 Created by Michael Levin.
