Codetown

Codetown ::: a software developer's community

What is Computational Linguistics?

Replies to This Discussion

-

Permalink Reply by Jim White on November 22, 2011 at 11:01pm

-

Commercial applications of computational linguistics have been growing by leaps and bounds. IBM created a new poster child for the field with their Jeopardy champion Watson, and most everyone has used Google Translate and/or Voice by now (and fewer yet will have escaped interacting with an Automated Voice Response system). Commercial segments making significant use of NLP include web marketing, medicine, biomedical research, finance, law, and customer call centers.

In the most general terms, computational linguistics is applying computational methods to problems in linguistics. Linguistics, in turn, is the study of human language in all its aspects. Although it hasn't received a lot of press until recently, computational linguistics has been around pretty much since the development of the computer. One of the first uses of digital computers (and a key impetus for their development) was in code breaking, which is an application of "compling" (I know, it looks like a typo for "compiling" but CL looks like Common LISP to me). The Association for Computational Linguistics (ACL), the largest and oldest scientific and professional society in the field, will hold its 50th annual conference next July.

Being such a broad field, there are of course many specializations and various communities with differing objectives and vocabularies. Folks primarily focused on engineering computer systems that process human language at a level deeper than simply character strings are generally under the Natural Language Processing (NLP) banner. The commercial success of CL applications and the focus on some problems other than the traditional NLP ones (translation, text understanding, and speech recognition and generation) has spawned Text Analytics as another subfield that is closely associated with Business Intelligence (itself largely a commercial application of machine learning methods). Perhaps the biggest focus of Text Analytics has been on sentiment analysis, which assesses a speaker's attitude or mood in something they've said or written (we usually say "speaker" even when the medium is written or typed). There are many businesses that use sentiment analysis on the web to find out what folks are saying about them and their products, in call centers for quality control, and in finance to predict future prices. Applications in law and government include "e-discovery" and smart OCR systems. Lastly, and far from leastly, is compling in the medical field and the specialized domain knowledge it calls for, which is known as bioinformatics. Bioinformatics may well be compling's "killer app" because of the tremendous opportunity to do good. Answering technical questions for medical practitioners is the application IBM has targeted as Watson's "day job".
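For anyone curious what sentiment analysis looks like in its very simplest form, here is a rough sketch in Python. It is only a toy: the word list, the scores, and the function names are all made up for illustration, and real systems typically rely on much larger lexicons or trained statistical models rather than a hand-written dictionary.

```python
import re

# Hypothetical toy lexicon (an assumption for illustration only):
# each word maps to a polarity score, +1 positive or -1 negative.
LEXICON = {
    "great": 1, "love": 1, "excellent": 1, "helpful": 1,
    "bad": -1, "terrible": -1, "slow": -1, "hate": -1,
}

# Words that flip the polarity of the token immediately after them.
NEGATORS = {"not", "never", "no"}


def sentiment_score(text):
    """Sum the polarity of known words, flipping a word that directly follows a negator."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    negate = False
    for tok in tokens:
        if tok in NEGATORS:
            negate = True
            continue
        polarity = LEXICON.get(tok, 0)
        score += -polarity if negate else polarity
        negate = False  # negation only applies to the very next token
    return score


def label(text):
    """Map the numeric score to a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for sample in [
        "I love this product, the support was excellent",
        "Terrible and slow",
        "not bad",
    ]:
        print(sample, "->", label(sample))
```

Counting words like this breaks down quickly on sarcasm, context, and domain-specific vocabulary, which is a big part of why the statistical methods have taken over.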

This is a big topic and I have lots I would like to say, so I intend to drop in here often. So stay tuned! Jim White

-

-

Permalink Reply by John Considine on November 23, 2011 at 10:01am

-

On a similar note: This fall a colleague of mine informed me of a class being offered by two Stanford professors. It is Introduction to Artificial Intelligence (ai-class.com). I signed up for the course along with 138,000 other students. It has been interesting to see some of the theories and formulas used to help the computer find the correct answer. Prior to starting the class, I did not realize it is mostly related to statistics. I just finished the midterm and will hopefully learn a lot more as the course continues.

-

-

Permalink Reply by Eric Lavigne on November 25, 2011 at 12:33pm

-

Next semester Stanford is offering a wider variety of online courses. One of them is specifically on natural language processing:

There are also some other courses that would make good follow-ups to the AI course.

Machine learning: http://jan2012.ml-class.org/

Probabilistic graphical models: http://www.pgm-class.org/ (These are the kinds of graphs you saw in the AI course - not about pictures)

Game theory: http://www.game-theory-class.org/

-

-

Permalink Reply by Michael Levin on November 25, 2011 at 12:53pm

-

Excellent. Thanks, Eric!

-

InfoQ Reading List

Anthropic Launches Claude Platform on AWS

Anthropic has announced the general availability of Claude Platform on AWS, a new deployment option that gives AWS customers direct access to Anthropic’s native Claude platform using AWS authentication, billing, and monitoring services.

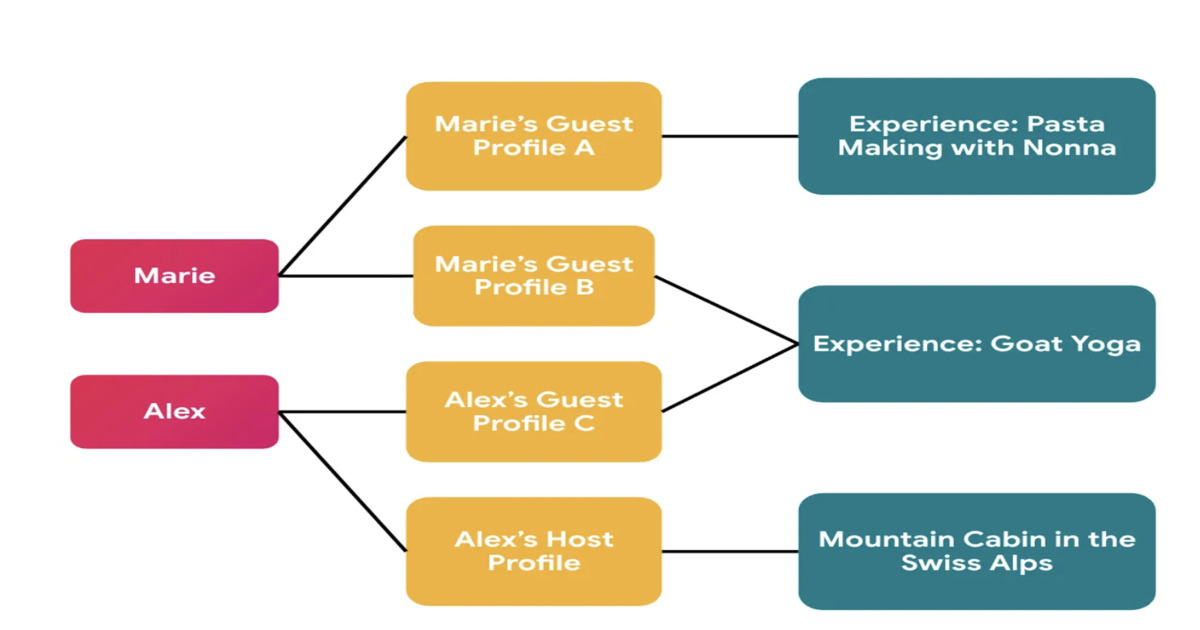

By Daniel DominguezAirbnb Implements Context-Aware Identity Model to Support Privacy-First Social Features

Airbnb has redesigned its identity system to support privacy-first social features in Experiences. The platform introduces context-specific profiles that separate global user identity from externally visible profiles, preventing cross-context linkage. The migration leveraged automated auditing, manual validation, and AI-assisted refactoring to enforce correct identity usage across services.

By Leela KumiliJEP 533 Tightens Exception Handling in Java's Structured Concurrency for JDK 27

JEP 533, Structured Concurrency, has reached integrated status for JDK 27. It refines exception handling and type safety in its API, particularly focusing on exception flow with a new ExecutionException type. Changes include an updated Joiner interface and a new open overload for easier configuration. The steady evolution signals ongoing development as feedback shapes the API.

By A N M Bazlur RahmanPresentation: What I Learned Building Multi-Agent Systems From Scratch

Paulo Arruda discusses Shopify’s evolution in AI adoption, moving from simple chat tools to a sophisticated swarm of specialized agents. He explains the transition from massive "all-in-one" prompts to lean, narrow-focused agent microservices that slash task times from hours to minutes. He also shares a future-looking hypothesis on using filesystem-based adapters to solve context bloat.

By Paulo ArrudaArticle: The Mathematics of Backlogs: Capacity Planning for Queue Recovery

Backlogs in distributed systems are arithmetic problems, not mysteries. This article provides practical formulas for calculating backlog drain time, sizing consumer headroom, and setting auto-scaling triggers. It covers key failure modes — retry amplification, metastable states, and cascading pipeline bottlenecks — plus when to shed load instead of draining.

By Rajesh Kumar Pandey

© 2026 Created by Michael Levin.

Powered by

![]()